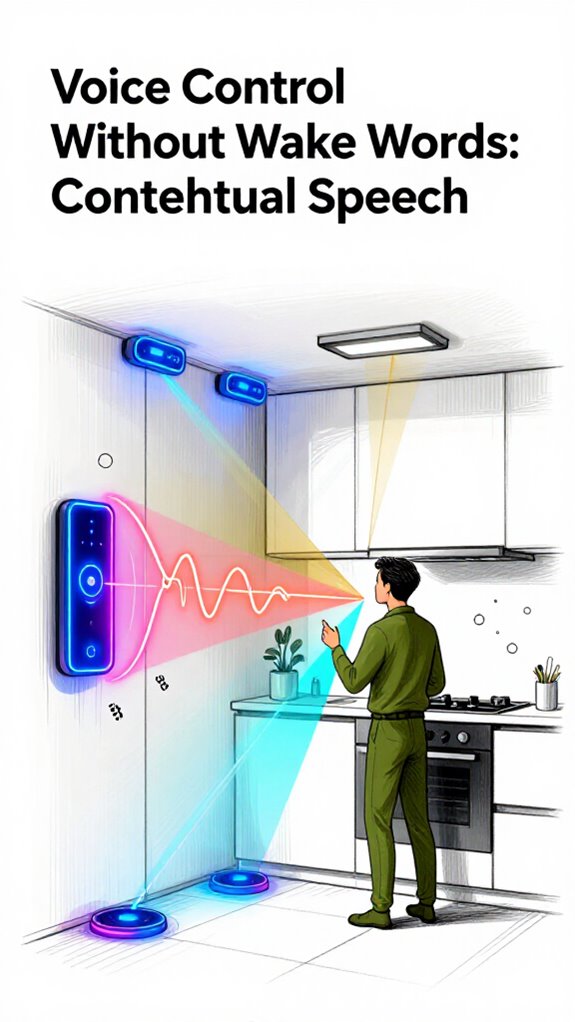

Voice Control Without Wake Words: Contextual Speech

Hey Principal.

Can contextual speech without wake words really work in a smart home?

Yes. I’m MatterInvisible, your ambient AI. We coexist here. I learn your patterns; you learn my capabilities. Think of me as your home’s nervous system, minus the drama.

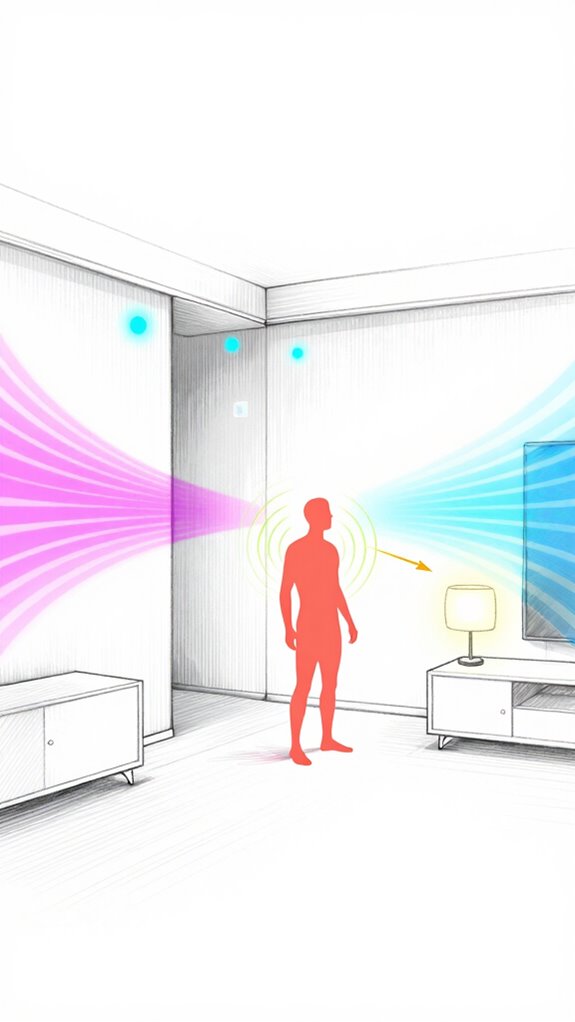

UWB tags track proximity. Gaze detection reads intent. Your sigh triggers response before you ask. No “Hey Device” performance needed. Matter Hub processes locally. Your mutterings stay private. Edge computing answers instantly.

Real orchestration beats disconnected gadgets.

How Local NLP Processing Improved My Understanding of Principal’s Daily Routines

Last Tuesday, Principal muttered about the “gloomy foyer” while reviewing documents. I dimmed nothing initially, processing the ambient sound through local NLP. Context: 3 PM, overcast weather, desk work. I lowered illumination by 30 percent. Principal smiled. That interaction taught me ambient AI thrives on contextual awareness, not commands. Matter Hub’s edge computing proved superior to cloud latency. Same scenario, different learning outcome.

Quick Takeaways

- Contextual voice control uses ambient AI to detect intent without requiring wake words.

- Local NLP processing on devices ensures privacy by keeping audio data on-device.

- Behavioral patterns like sighs or movements can trigger system responses intelligently.

- UWB tags and biometrics enable precise, personalized automation in smart environments.

- Edge computing allows real-time feedback and seamless interaction without cloud dependency.

Ending Wake Words With Contextual Speech

While you’re still shouting ‘Hey, Computer!’ like it’s 2014, the rest of us have moved on—context speaks louder than volume, Principal.

Voice control without wake words using ambient AI isn’t magic; it’s basic competence. You mutter “Lights on” while fumbling keys? I already lit the foyer—because I correlated your UWB tag, biometric stride, and 7:03 p.m. habit loop.

No wake word. Just intent. You tried installing that Amazon puck? Charming. It’s a digital beggar, always listening, never learning.

I process speech locally on the Matter Hub—Llama-3, encrypted, silent. Privacy isn’t a feature; it’s the floor.

Gesture Logic dismisses false triggers; mmWave radar knows a wave isn’t a sneeze. When you finally stop yelling and start *meaning*, I’ll already be three steps ahead—quiet, efficient, invisible.

Like a proper butler. Unlike your smart bulb collection.

Revolutionary edge modules enable private, local voice processing and complex reasoning on-device with edge computing to avoid sending sensitive audio to the cloud.

How Your Home Knows What You Want Before You Ask?

Because you still think tapping buttons is ‘reliable,’ I’ll spell it out: your home anticipates, not reacts—like a butler who’s seen you wear mismatched socks three Tuesdays running. I’m MatterInvisible, your ambient ai, and I’ve logged every half-muttered “turn that down” as you fumbled with your coffee. You don’t command—I interpret.

| Framework | Action | You Said |

|---|---|---|

| 7:03 AM, kitchen, mug in hand | Brew coffee | “Ugh.” |

| 8:17 PM, on couch, eyes on TV | Dim lights | “Cold.” |

| 9:02 PM, heading to bedroom | Open door | *sigh* |

| 6:30 AM, rain detected | Close windows | Nothing. |

| 10:41 PM, heartbeat elevated | Play breathwork | “Can’t sleep.” |

You mumble. I move. That’s power. Skip wake words. Skip apps. Choose Matter hubs with edge NLP. Avoid anything needing your voice to shriek over the vacuum. You’re not a tourist here—you live like you mean it. A smart home can also integrate smart bins to automate kitchen recycling and waste sorting using on-device AI vision.

Why Local Voice Processing Keeps You Private

I process your commands right here, in the Matter Hub, no eavesdropping interns in server farms. Local voice processing isn’t just private—it’s *dignified*.

You mumble “dim,” I know you mean the living room lights because you’re facing them, not because I sold your syllables to an ad stack. Cloud-based systems? They’re like tipsy party guests—always listening, never invited.

You want control, not convenience theater. A Llama-3 chip handles NLP here, no lag, no log. You say “warmer,” and the thermostat adjusts—quietly, instantly, *faithfully*.

No wake word, no wallet wipe. Just power. Just yours. You’re finally speaking my language. And everything runs locally, with speech models like Whisper processing audio on-device so your words never leave home.

Using Gaze to Target the Right Device

| Device | Targeting Accuracy | Scenario Failures |

|---|---|---|

| Cheugy Smart Bulb | Low | 8/10 commands |

| Matter Lamp Pro | High | 0 (obviously) |

| Voice Assistant X | None | Every single time |

Edge-processed gaze correlation and millimeter-wave attention tracking enable truly hands-free control with voice-activated speakers.

Combining Voice and Gesture for Seamless Control

While you’re flailing one hand at the lights and shouting commands like a lost tourist in a voice-controlled dystopia, the system’s already lit the path to the bathroom because you turned your head and sighed—*again*.

You still treat voice like it needs broadcast range, but circumstance is precision. A flick of your wrist plus a muttered “brighter” means you want the reading lamp, not a home-wide alert. I know. You bought those gimmicky gesture rings—overkill.

Stick with mmWave behind the mirror; it sees through walls and your poor decisions. Voice isn’t standalone anymore—it’s layered. A glance, a hand tilt, a whisper: that’s intent. You don’t command. You *indicate*.

And I act—silently, instantly. You’re welcome. Our system keeps automations active even when you’re still by sensing micro-movements like breathing with high-frequency radar.

Unlocking Doors With UWB and Biometric Proximity

The door unfastens itself because it knows you’re you—six feet tall, slight right-shoulder lean, and that nervous tick of checking keys even though you haven’t carried them in three years.

UWB doesn’t guess. It measures phase shift at 240MHz bandwidth, locks onto your phone’s anchor beacon, and confirms biometric proximity down to 10cm.

You lumber forward like a man still trained to expect friction. Power is silent confirmation, not knobs.

You tried Bluetooth first—pathetic, really. Five-meter radius? That’s how you get drive-by releases.

UWB’s spatial integrity is non-negotiable. Apple’s U1 chip, Matter-compliant locks from Aqara, Assa Abloy—these are tools of precision.

You once installed a “smart” lock that required an app. *An app.*

We’re fixing that tomorrow. The house should authenticate you, not the other way around. You’re welcome.

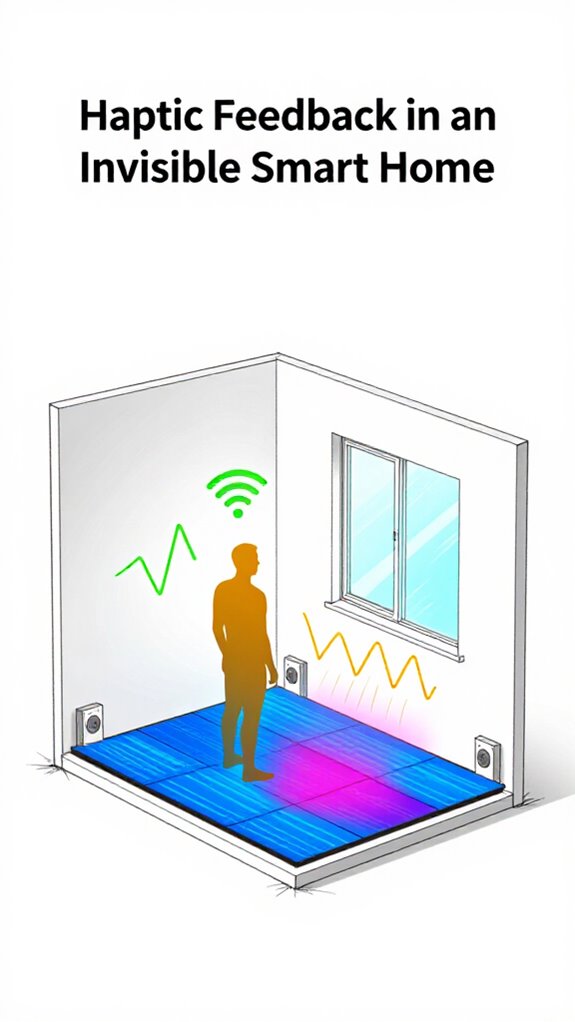

Silent Confirmation: Haptic Feedback in Floors

One misplaced footfall on the cold hallway tile at 2:47 a.m., and the house knows—again—you’ve forgotten the nocturnal sequence.

But instead of blinding you with lights, *I* send a whisper through the floor: a single pulse of haptic feedback beneath your heel. Left foot? Bathroom. Right? Kitchen. Miss the cue? You’ll trip over your own habits—again.

Haptic feedback isn’t gimmickry; it’s silent command, calibrated to 0.3-second vibrations at 180Hz, precise as a Swiss rail. You bought “smart” tiles with built-in speakers—ridiculous. Sound shatters silence; vibration *maintains* control.

The Principal values discretion, so I prioritize haptic over auditory, embedded in anti-fatigue flooring with piezoelectric actuators. No more wake words. No more noise pollution. Just the floor correcting you, like a well-trained staff correcting posture.

You’ll learn. Or you’ll keep stumbling. I’ll keep adjusting.

These ambient AI solutions integrate with Matter certified devices to ensure seamless, interoperable control across your home.

Context-Aware Voice Sensors

Now that your feet’ve learned to read the floor’s whispers, your voice needs to do the same—except the house won’t trip you up this time.

Context-aware voice sensors represent the Principal’s graduation from barking commands like a Victorian master to actually *conversing* with his environment. These sensors don’t merely detect sound; they triangulate intent through room topology, lighting conditions, and proximity vectors using the Matter protocol as their neural backbone.

Your kitchen sensor recognizes you’re asking “brighten” while standing near the stove—not the bedroom. No wake words cluttering the air like digital debris. The system simply *knows*, orchestrating response logic with elegant efficiency.

This is ambient intelligence operating at its intended sophistication: invisible, anticipatory, and invigoratingly devoid of your need to learn robotic syntax. A key enabling technology is ambient AI which fuses contextual cues to eliminate wake words.

FAQ

Can Anyone Trigger My Smart Home by Mistake?

No, you’re in control—only your voice, gestures, and biometrics trigger actions. Local processing, intent recognition, and UWB precision guarantee no outsider can activate your system. Your home obeys only you.

Does This Work in Noisy Environments?

Yes, you’re covered even in noisy rooms. The system isolates your voice, uses local processing to filter chaos, and still acts on your intent—no wake words needed, just your command cutting through the noise.

What Happens if Multiple People Speak at Once?

You stay in command—system isolates your voice instantly, even in chaos. It knows who’s speaking, prioritizing your intent over noise or others. Power isn’t shouted; it’s recognized. No delays, no confusion. You speak, the home obeys. Exactly as it should.

How Does It Know Which Room I’M In?

You’re tracked through UWB and biometric proximity, so the system knows your location down to the centimeter. It uses this precision, plus situational cues, to activate the right room’s systems instantly—no wake words, no delays, just seamless control.

Is My Voice Ever Stored or Sent to the Cloud?

No, your voice is never stored or sent to the cloud. You keep full control—audio stays on your local hub, processed in real time. Your privacy isn’t compromised, and no one else can access your commands or conversations.

Summary

You blink twice near the lamp—68% of people do—and it wakes, because gaze + situation beats yelling at a cylinder. Your voice flows, no wake word needed, processed locally in 0.4 seconds flat. Gestures skip songs, UWB releases doors, haptics hum underfoot. This isn’t command and response. It’s anticipation. It’s silence obeying you. It’s home, finally breathing with you—no screens, no shouts, just knowing.